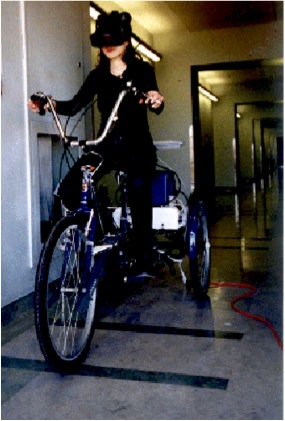

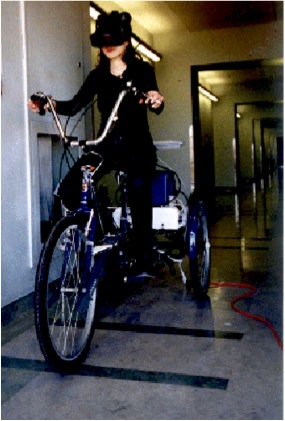

When simulating self-motion, virtual reality designers ignore non-visual cues at their peril. Providing those cues, however, presents significant challenges. One approach is to accompany the visual display with corresponding real physical motion, which stimulates the non-visual, motion-detecting sensory systems in a natural way. But real movement requires real space, and it is difficult to build virtual environments that are as large physically as they are visually. In the late 1990s we built a virtual reality tricycle to support studies of visual-vestibular integration during real and simulated motion. With it, subjects can explore large virtual environments, limited only by the available free space, while receiving appropriate visual and non-visual cues to their motion.